Many AI agent products now talk about memory: long-term memory, project memory, user preferences, chat history, knowledge bases, vector retrieval, and automatic summaries.

That makes it easy to believe one simple idea: the more an agent remembers, the smarter it becomes.

For engineering agents, I think the opposite is closer to the truth.

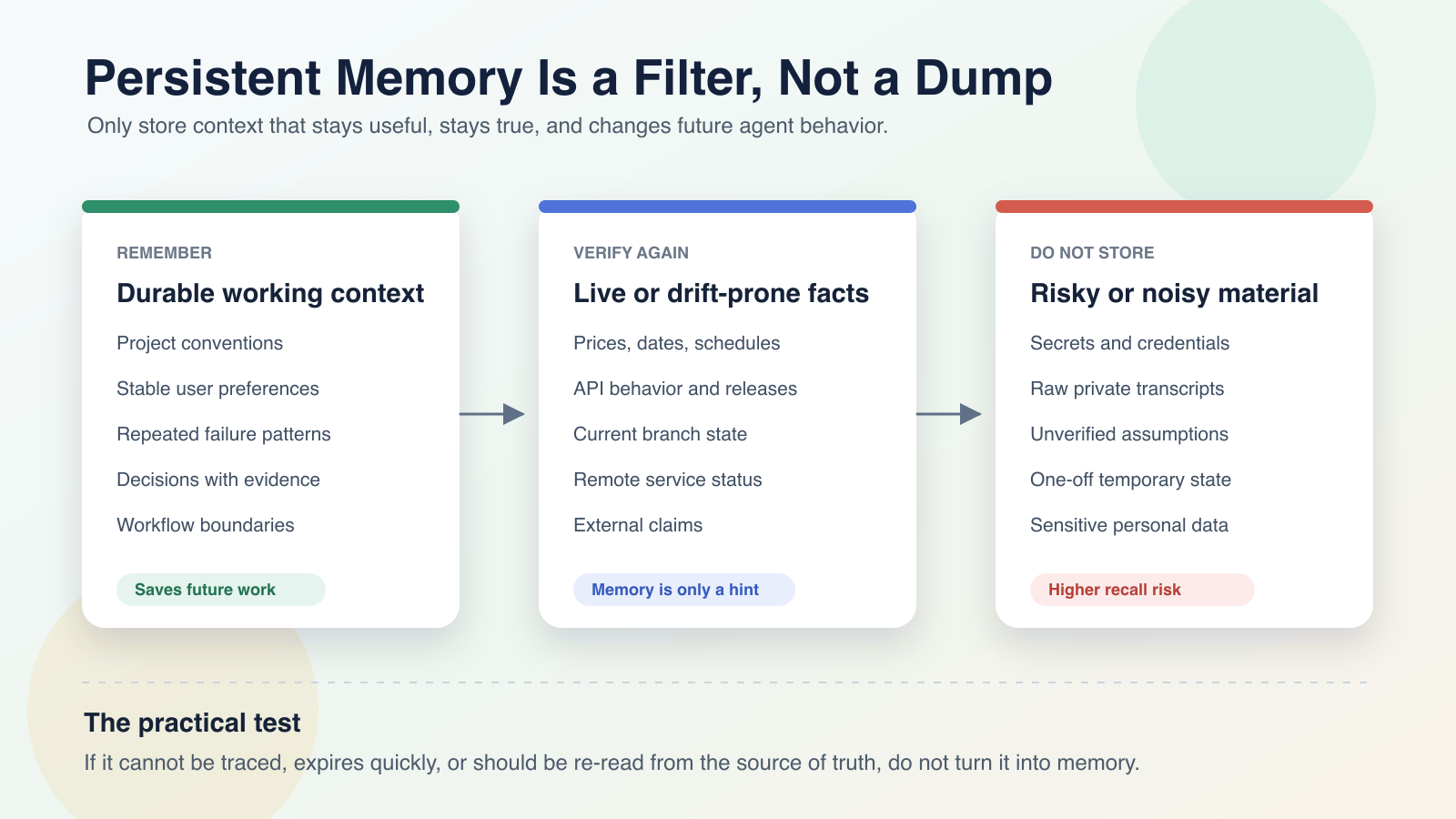

Persistent memory should not be a dumping ground for chat history. It should not mean putting every conversation, file, and assumption into a vector database. It should act more like a filter: only stable, reusable context that can improve future behavior deserves to survive.

Otherwise, memory becomes noise. The agent may treat stale facts as current, turn one-off instructions into permanent rules, preserve unverified assumptions, or carry sensitive context into future tasks.

The Short Version

An agent should remember information that:

- will likely be useful again;

- will not expire quickly;

- reduces repeated explanation;

- changes the agent’s default behavior;

- has a source, a scope, and a way to be verified.

It should not turn these into long-term memory:

- secrets, passwords, tokens, cookies, recovery codes;

- temporary branch or workspace state;

- prices, schedules, versions, policies, and other volatile facts;

- guesses without evidence;

- raw private transcripts;

- one-off emotions, preferences, or temporary instructions.

In short: memory is an index, not an archive. It is working policy, not raw logs.

Why Remembering More Can Make Agents Worse

For a chat assistant, remembering your name, tone, or writing preference is usually harmless. Engineering agents are different. They read code, edit files, run commands, call MCP servers, control browsers, access internal tools, and sometimes participate in release workflows.

When memory quality is poor, the blast radius becomes much larger.

For example:

- the agent remembers that a project uses

pnpm, but the project later migrates tobun; - it remembers that the

releasebranch can deploy directly, but the rule later changes to require parity withorigin/release; - it remembers that an API returns

groupName, while the real source of truth changed todisplayName; - it remembers that the user skipped tests once, then applies that shortcut to a risky backend change;

- it stores a temporary SQL query from a debugging session and treats it as a general diagnostic path.

These are not model intelligence problems. They are memory lifecycle problems.

OpenAI’s memory documentation separates saved memories from reference chat history and emphasizes user controls such as managing, deleting, and disabling memory. Claude Code also separates memory by scope through files such as CLAUDE.md. The underlying lesson is important: useful memory needs boundaries.

Engineering agents should follow the same principle.

Three Kinds of Context

I like to separate agent context into three layers.

1. Current Task Context

This is short-lived.

Examples:

- the user’s latest request;

- the current git diff;

- terminal output from this turn;

- the current browser page state;

- logs found during this debugging session;

- the active plan and checklist.

This context is important while the task is active, but most of it should not become long-term memory. After the task is done, it should either be captured in code, documentation, issues, commits, or simply disappear.

If every task scene becomes memory, future tasks will be polluted by old state.

2. Project Source of Truth

This is the context agents should trust first.

Examples:

AGENTS.md/CLAUDE.md;README.md;Makefile;- CI configuration;

- schema and migrations;

- package catalogs;

- API type definitions;

- tests;

- project design documents.

These files may not be called memory, but for an agent they are the most important verifiable memory.

If a memory conflicts with the repository, the repository should win. Memory may point the agent toward the right file, but it should not replace the file.

3. Long-Term Working Memory

This is what persistent memory should store.

It captures cross-task knowledge that is stable enough to reuse but may not yet belong in project files.

Examples:

- the user prefers Chinese by default;

- this repository publishes paired

.en.mdarticles for new Chinese articles; - the

publicdirectory is often dirty, so article validation should avoid writing into it; - for certain UI changes, the user explicitly does not want unrelated screens touched;

- a deployment script must preserve

linux/amd64because the local machine is macOS on arm64; - for debugging tasks, inspect logs first instead of guessing fixes.

The value of this layer is to reduce repeated setup and keep future work inside known boundaries.

What Should Agents Remember?

This is the table I use.

| Keep | Example | Why |

|---|---|---|

| Stable preferences | Default language, concise answers, conclusion first | Changes collaboration style |

| Project conventions | Articles live in content/article/; English uses the same slug plus .en.md | Reduces repeated discovery |

| Hard constraints | Do not change backend contracts; do not touch unrelated UI; avoid writing to public | Prevents real regressions |

| Repeated failure patterns | A known install issue came from cache permissions; a slow deploy required checking workflow logs | Speeds up debugging |

| Confirmed business rules | Collaborators can add or subtract points but cannot edit student data | Prevents product rule drift |

| Approved direction | A specific UI direction was accepted and future work should iterate from it | Preserves continuity |

| Reusable workflows | Validate articles with hugo --renderToMemory --minify | Improves delivery consistency |

These are not historical details. They are future defaults.

A good memory should answer this question:

Will this make the agent behave more correctly next time?

If not, do not store it.

What Should Be Verified Again?

Some information is useful as a clue but unsafe as a fact.

Examples:

- latest API behavior;

- current prices and plans;

- GitHub Actions status;

- whether a remote branch has changed;

- whether a model is still available;

- laws, policies, and platform rules;

- release dates and schedules;

- production service status.

These facts can expire even if they were correct last time.

So memory should say:

Last time, the release slowdown was traced to the image build step.

For similar deploy issues, first inspect the current workflow and recent Actions logs.It should not say:

Deploys are slow because of image builds.The first version is a reusable diagnostic clue. The second is a stale conclusion waiting to happen.

What Should Never Be Stored?

The biggest danger is not that memory is incomplete. It is that memory contains material it should not contain.

1. Secrets and Credentials

This includes:

- API keys;

- tokens;

- cookies;

- SSH private keys;

- database passwords;

- payment system secrets;

- recovery codes;

- one-time verification codes.

These should not enter memory, articles, logs, screenshots, or issues.

2. Raw Private Content

Do not store large chunks of email, customer data, order records, student information, medical information, financial records, or private conversation transcripts.

If context is needed, compress it into a non-sensitive rule:

For education SaaS work, the user cares strongly about teacher collaboration permissions and parent-facing visibility boundaries.Do not preserve the raw personal data behind that rule.

3. Unverified Guesses

Agents form hypotheses during debugging. Hypotheses belong in the current task, not long-term memory.

Only conclusions backed by code, logs, tests, or production verification deserve to survive.

4. One-Off State

Examples:

- the current temporary branch;

- a port that happened to be busy today;

- a file generated during this task;

- the fact that this specific task skipped tests;

- the fact that a page was open in a specific Chrome tab.

These should remain local to the active session.

A Simple Test Before Saving Memory

Ask five questions:

- Will this be useful next time?

- Will it probably remain true a month from now?

- Will it change the agent’s default behavior?

- Can it be traced or re-verified?

- If it is wrong, will it create risk?

Only save the memory if the first four answers are mostly yes and the fifth risk is acceptable.

If something is useful but likely to drift, write it as a place to check next time, not as a permanent fact.

If something is high risk, do not solve it with memory. Put it in permissions, CI guards, tests, docs, or human approval workflows.

How Should Memory Be Written?

Good memory is short, stable, and operational.

Bad:

The user asked us to update an article, public was dirty, then we ran Hugo and it seemed okay.Better:

For silenceper.com article work, source articles live in content/article/; English articles use the same slug plus .en.md; validate with hugo --renderToMemory --minify to avoid writing into public.The better version:

- removes process noise;

- keeps future behavior;

- names the repository scope;

- includes concrete paths and commands;

- avoids treating temporary state as truth.

Where Should Different Information Live?

Not every durable fact belongs in the same memory system.

| Information type | Better home |

|---|---|

| Project rules | AGENTS.md / CLAUDE.md |

| Build and deploy commands | README.md / Makefile / CI |

| Business rules | Code, tests, product docs |

| Personal preferences | User-level memory |

| Repository conventions | Project-level memory |

| Reusable procedures | Skill / runbook |

| Active task state | Current session / issue / PR description |

| Volatile facts | Re-check the source every time |

A mature agent workflow should not rely on memory alone.

The better structure is:

memory points the agent in the right direction

repo files define the rules

tests prevent regressions

logs reconstruct the scene

human approvals guard risky actionsThat way, even if memory is incomplete, the agent can recover by reading the source of truth.

The Real Value of Persistent Memory

Persistent memory is not valuable because it makes an agent remember everything.

It is valuable because it helps the agent avoid repeated mistakes.

For example:

- it does not ask every time whether to use Chinese or English;

- it does not reverse a business rule that was already settled;

- it does not repeat the same deploy mistake;

- it does not bring back a UI direction the user rejected;

- it does not modify unrelated files in a dirty worktree;

- it does not answer plan or SKU questions from memory when the code should be checked.

These are not dramatic AGI abilities. They are exactly the things that make engineering collaboration smoother.

The productivity gain comes from remembering fewer things more accurately, not from storing more fragments.

My Recommendation

If you use Codex, Claude Code, Cursor, or similar coding agents heavily, manage memory with these rules:

- Treat memory as an index, not the source of truth.

- Put stable rules in project files.

- Re-check volatile facts from live sources or the repository.

- Keep sensitive material out of memory.

- Write each memory as future behavior, not historical narration.

- Regularly prune stale, duplicate, and vague memories.

The goal is not to make the agent remember everything. The goal is to make it interrupt you less, drift less, and repeat fewer mistakes.

When memory becomes a maintainable engineering asset instead of accumulated chat residue, an agent starts to feel like a reliable long-term collaborator.